Google is always updating its search algorithms, but it’s rare for the company to publish information about what data it uses to determine rankings on search engine results pages (SERPs). This year, the industry giant publicly announced a new ranking factor: Core Web Vitals.

The decision to make this information public raises two important questions: what are Core Web Vitals, and why are they so important? Web developers can read on to find out what they need to know.

What Are Core Web Vitals?

Google’s Web Vitals initiative is intended to simplify webmasters’ interactions with Page Experience metrics. Instead of playing guessing games about how their pages are performing according to these metrics, site owners can now use Google tools to get a better understanding of the most important metrics. Those are the ones the company refers to as Core Web Vitals.

Each Core Web Vital can be viewed as a stand-in for a measurable aspect of real-world user experience. Together, they join other Page Experience signals such as HTTPS, mobile-friendliness, and the absence of intrusive interstitials to give web designers one more benchmark for success.

Evolving Metrics

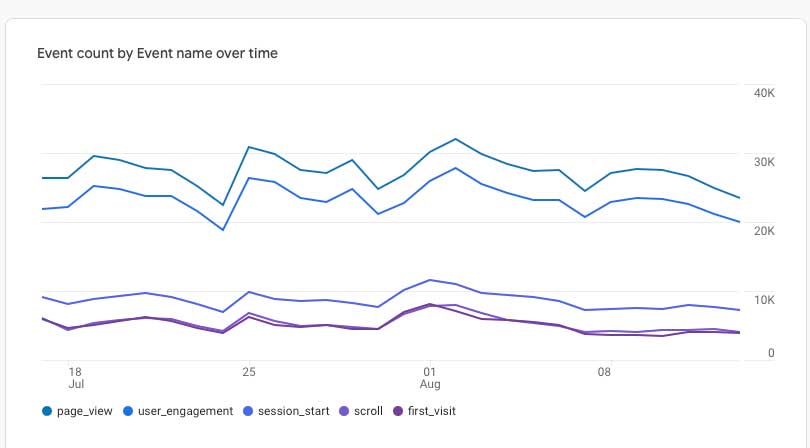

These new Page Experience metrics are already incorporated into Google’s suite of measurement tools, but that’s just the beginning. Google only recently developed the Web Vitals initiative, and the company plans to make changes over time. In 2020, Core Web Vitals focus only on three key factors affecting user experience. In 2021, the company plans to roll out more changes.

What Are the Most Important Core Web Vitals for 2020?

There are three essential Core Web Vitals metrics for 2020. They measure overall load performance, interactivity, and visual stability. Web designers can use Google tools to evaluate their progress toward recommended targets for each of these metrics. For now, Google recommends judging each metric at the 75th percentile of page loads, so a page hits a target when at least 75% of its visits fall within it.
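To make the 75th-percentile target concrete, here is a minimal sketch of how a site owner might compute it from their own measurements. The function name `percentileOf` and the sample values are hypothetical, and the nearest-rank method is only one of several percentile definitions; Google’s tools may aggregate field data differently.

```typescript
// Nearest-rank percentile: the smallest sample such that at least
// `percentile` percent of all samples are less than or equal to it.
// (Assumption: nearest-rank is used here for simplicity.)
function percentileOf(samples: number[], percentile: number): number {
  if (samples.length === 0) {
    throw new Error("percentileOf needs at least one sample");
  }
  if (percentile <= 0 || percentile > 100) {
    throw new Error("percentile must be in the range (0, 100]");
  }
  // Copy before sorting so the caller's array is left untouched.
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.ceil((percentile / 100) * sorted.length);
  return sorted[rank - 1];
}

// Hypothetical LCP samples in milliseconds, one per page load.
const lcpSamplesMs = [1800, 2100, 1900, 5200, 2300, 2400, 2000, 3100];
const lcpP75 = percentileOf(lcpSamplesMs, 75); // 2400
```

In this made-up data set, one very slow load (5,200 ms) does not stop the page from meeting a 2.5 second target, because the 75th percentile is what gets compared against the threshold.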

Largest Contentful Paint

The Largest Contentful Paint (LCP) Core Web Vital measures loading performance. This metric should come as no surprise to experienced web designers since it’s common knowledge in the industry that load times affect user experience.

In 2020, pages whose largest content element renders within 2.5 seconds of the page starting to load are considered to be performing well in this arena. Pages with an LCP of 2.5 to 4 seconds need improvement. An LCP longer than 4 seconds is rated poor.

First Input Delay

First Input Delay (FID) offers a measurement of a page’s interactivity. When page visitors click a button, tap a link, or otherwise trigger a JavaScript event handler, the browser has to start processing that interaction. FID measures the delay between the visitor’s first interaction and the moment the browser is able to begin responding to it.

Aim for an FID of 100 ms or less. If a page has an FID of 100 to 300 ms, it needs improvement. At more than 300 ms, it needs serious help. Factors like the quality of JavaScript and third-party code can influence FID.

Cumulative Layout Shift

The Cumulative Layout Shift (CLS) Core Web Vital measures visual stability. It answers the question, how much does the page’s content move around unexpectedly while visitors are using it? If a page loads quickly, but buttons and links shift position just as users try to click on them, that’s a problem with CLS.

The top factor influencing CLS is image size definition. Animations and other on-page content can also affect the page’s visual stability. A well-designed page with all its image sizes and animations defined in HTML should have a CLS of 0.1 or less. Pages with a CLS between 0.1 and 0.25 need improvement. A CLS higher than 0.25 is rated poor.
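The thresholds for all three metrics can be summarized in a small lookup. The following is a sketch rather than an official implementation: the names `VITAL_THRESHOLDS` and `rateVital` are hypothetical, and treating a value that sits exactly on a boundary as belonging to the better category is an assumption.

```typescript
type VitalName = "LCP" | "FID" | "CLS";
type Rating = "good" | "needs improvement" | "poor";

// [upper bound for "good", upper bound for "needs improvement"].
// LCP and FID are in milliseconds; CLS is a unitless score.
const VITAL_THRESHOLDS: Record<VitalName, [number, number]> = {
  LCP: [2500, 4000],
  FID: [100, 300],
  CLS: [0.1, 0.25],
};

// Classifies a single measurement against the thresholds above.
// Assumption: boundary values count toward the better rating.
function rateVital(name: VitalName, value: number): Rating {
  const [goodMax, needsImprovementMax] = VITAL_THRESHOLDS[name];
  if (value <= goodMax) return "good";
  if (value <= needsImprovementMax) return "needs improvement";
  return "poor";
}

// Example: a page with a 2.4 s LCP, 250 ms FID, and 0.3 CLS.
const lcpRating = rateVital("LCP", 2400); // "good"
const fidRating = rateVital("FID", 250);  // "needs improvement"
const clsRating = rateVital("CLS", 0.3);  // "poor"
```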

Why Are Core Web Vitals Important?

Core Web Vitals will influence all search results on Google. They’re also going to play a large role in determining what pages appear in Google Top Stories, the news results that users sometimes see at the top of their results pages.

Until now, AMP was the most important requirement for Google Top Stories. That’s going away. Pages will still need to meet the search engine’s requirements for inclusion into Google News. However, instead of AMP, the pages will now need to meet the minimum thresholds for Web Vitals described above.

How Important Are Core Web Vitals for SERPs?

When it comes to SERPs, Google’s algorithms feature hundreds of ranking signals. Poor performance in Core Web Vitals can make a difference, particularly when competing pages are otherwise similar in relevance and quality.

That said, Core Web Vital performance isn’t the only factor that will determine rankings. The difference will be most noticeable for websites that already exist in competitive environments.

User Experience

Since Core Web Vitals were designed to quantify Page Experience, they also impact other factors such as click-through rates. Google has performed studies that show how influential these aspects of Page Experience are on user behavior. According to the search engine itself, meeting the thresholds for Core Web Vitals performance makes users 24% less likely to abandon pages.

How to Measure Core Web Vitals

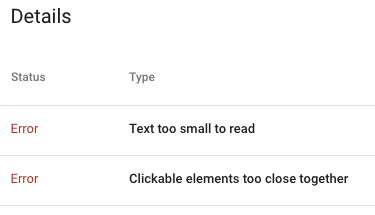

One of the stated purposes of the shift to Core Web Vitals is to make it easier for site owners and web designers to measure performance. As a result, Google has already built Core Web Vitals measurement into just about every performance tool it offers. Many third-party companies have also followed suit.

Field Tools

Site owners can use field tools to measure performance. The Chrome User Experience Report is a good place to start. It collects real user data for each signal, anonymizes it, and aggregates it so that site owners can assess real-world performance. Google’s PageSpeed Insights and Search Console tools can also measure all three current Core Web Vitals.

JavaScript Measurements

It’s easy to measure Core Web Vitals in JavaScript. Use the web-vitals JavaScript library to measure performance using standard web APIs. This approach lets web developers measure each metric accurately by simply calling a single function and passing it a callback.
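As a rough illustration of that callback pattern, the sketch below forwards each reported metric to a reporting function. The export names of the web-vitals library have varied across major versions (`getLCP`-style in early releases, `onLCP`-style later), so the sketch accepts the registration functions as parameters instead of importing them. `VitalReport`, `VitalRegistrar`, `watchVitals`, and the `/analytics` endpoint are all hypothetical names.

```typescript
// Minimal shape of the object the library passes to a callback.
// The library's own metric type has more fields; only these are used here.
interface VitalReport {
  name: string;
  value: number;
  id: string;
}

// A registration function, such as the library's LCP, FID, or CLS export.
type VitalRegistrar = (onReport: (report: VitalReport) => void) => void;

// Subscribes one handler to every registrar and forwards each report
// as a small JSON payload.
function watchVitals(
  registrars: VitalRegistrar[],
  send: (payload: string) => void
): void {
  for (const register of registrars) {
    register((report) => {
      send(
        JSON.stringify({ name: report.name, value: report.value, id: report.id })
      );
    });
  }
}

// In a browser bundle this might be wired up roughly as follows
// (export names depend on the installed web-vitals version):
//   import { onCLS, onFID, onLCP } from "web-vitals";
//   watchVitals([onCLS, onFID, onLCP], (body) =>
//     navigator.sendBeacon("/analytics", body)
//   );
```

Passing the registration functions in also makes the reporting logic easy to exercise without a browser, since a test can supply a fake registrar that fires immediately.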

Site owners can also view performance reports without writing code by using the Web Vitals Chrome Extension. The Chrome Extension leverages the web-vitals library to measure key metrics and display them right in the browser. Developers can even measure competitors’ metrics for all three Core Web Vitals.

Lab Metrics

Web developers also have access to two tools that can help site owners measure Core Web Vital performance in lab environments. This allows site owners to test their pages before releasing them and catch potential performance regressions in advance. Both Chrome DevTools and Lighthouse can measure LCP and CLS.

There is currently no way to measure FID in the lab since FID requires user input. However, it is possible to measure Total Blocking Time (TBT). In the pre-release development stages, TBT forms an excellent proxy for FID. Optimizing TBT should also improve FID.
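For readers unfamiliar with TBT, the sketch below shows how it is derived: any main-thread task longer than 50 ms counts as a long task, and only the portion beyond 50 ms contributes to the total. The function name and the task durations are hypothetical, and the sketch assumes the list has already been limited to tasks that ran between First Contentful Paint and Time to Interactive, which is the window lab tools use.

```typescript
// Tasks longer than this are considered "long" and block the main thread.
const LONG_TASK_THRESHOLD_MS = 50;

// Sums the blocking portion (time beyond 50 ms) of each task.
// Assumption: durations cover only tasks between FCP and TTI.
function totalBlockingTime(taskDurationsMs: number[]): number {
  return taskDurationsMs.reduce(
    (total, duration) =>
      total + Math.max(0, duration - LONG_TASK_THRESHOLD_MS),
    0
  );
}

// Hypothetical main-thread tasks: 30 ms, 120 ms, 50 ms, and 250 ms.
// Blocking portions: 0 + 70 + 0 + 200 = 270 ms.
const tbtMs = totalBlockingTime([30, 120, 50, 250]);
```

This is why splitting one 250 ms task into several tasks of under 50 ms each can remove its contribution to TBT entirely, even though the total amount of work stays the same.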

How to Improve Performance

The tools described above help site owners get an idea of what pages are performing well and which have room for improvement. Once they’ve identified problem areas, site owners or web developers can get started optimizing their pages.

Optimizing LCP

There are four primary issues that cause poor LCP performance. They are:

- Slow server responses

- Render-blocking CSS and JavaScript

- Slow resource loading

- Problems with client-side rendering

Resolving these problems should bring most pages back within the threshold for LCP performance. Developers can optimize servers, route users to nearby CDNs, cache assets, change how they serve HTML pages, and establish earlier third-party connections.

Optimizing FID

When it comes to poor FID performance, the primary culprit is heavy JavaScript execution. Web developers should focus on optimizing JavaScript to ensure that browsers can respond to user input with sufficient speed. Since JavaScript is executed on the main thread, heavy code will prevent browsers from handling user interactions.

There are four ways to improve JavaScript for FID optimization. Developers can break up longer tasks, use web workers, reduce execution times, and optimize pages for interaction-readiness. When lab-testing the results, bear in mind that TBT can be used as an effective proxy for FID.
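Breaking up longer tasks is the most mechanical of these techniques. One possible approach, sketched here under assumptions, is to process a large list in slices and hand control back to the browser between slices so that pending user input can be handled. The function name `processInChunks` and the 50 ms default budget are illustrative choices, not part of any library.

```typescript
// Processes items one by one, yielding to the event loop whenever the
// current slice has used up its time budget. Yielding with setTimeout
// lets the browser handle queued input events before work resumes.
async function processInChunks<T>(
  items: T[],
  handle: (item: T) => void,
  budgetMs: number = 50
): Promise<void> {
  let deadline = Date.now() + budgetMs;
  for (const item of items) {
    handle(item);
    if (Date.now() >= deadline) {
      // End this task; the rest of the loop continues in a new task.
      await new Promise<void>((resolve) => setTimeout(resolve, 0));
      deadline = Date.now() + budgetMs;
    }
  }
}

// Usage sketch: `rows` and `renderRow` are hypothetical.
//   await processInChunks(rows, renderRow);
```

Work that never needs to touch the DOM can instead be moved off the main thread altogether with a web worker, which avoids the need to yield at all.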

Optimizing CLS

Most poor CLS performance is caused by images or embeds that lack defined dimensions. Start by fixing this problem before moving on to addressing other problems. These could include dynamically injected content, web fonts that cause a flash of invisible or unstyled text (FOIT/FOUT), or actions that require network responses prior to updating the DOM.
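A quick audit can reveal which images are missing dimensions. The sketch below is one hypothetical way to do it: `ImageLike` and `findUnsizedImages` are made-up names, and the check only looks at `width` and `height` attributes, so images that are sized purely through CSS would be reported as well.

```typescript
// The subset of an <img> element that the audit needs. Real
// HTMLImageElement objects satisfy this shape structurally.
interface ImageLike {
  src: string;
  getAttribute(name: string): string | null;
}

// Returns the images that lack an explicit width or height attribute.
// Assumption: attribute presence is used as a simple proxy for
// "the browser can reserve space before the image loads".
function findUnsizedImages<T extends ImageLike>(images: T[]): T[] {
  return images.filter(
    (img) =>
      img.getAttribute("width") === null ||
      img.getAttribute("height") === null
  );
}

// In a browser console this might be used roughly as follows:
//   findUnsizedImages(Array.from(document.querySelectorAll("img")))
//     .forEach((img) => console.log(img.src));
```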

Resolving these problems will ensure that layout shifts only occur when they are expected. Bringing CLS within the threshold will improve the page’s Core Web Vital scores, especially if these changes are made in conjunction with optimizing FID and LCP.

Final Thoughts on Core Web Vitals

Site owners and web developers who have found themselves frustrated by Google’s lack of transparency in the past should be happy to learn about the switch to Core Web Vitals. Expect these metrics to play an outsized role in Google Top Stories performance and a more moderate role in SERP performance more generally.

Developers should also expect these metrics to evolve over time as Google continues to implement additional changes. The good news is, the company has indicated that it will continue to keep developers and site owners in the loop as this happens.